
In honor of MisinfoCon this weekend, it’s time for a brain dump on propaganda — that is, getting large numbers of people to believe something for political gain. Many of my journalist and technologist colleagues have started to think about propaganda in the wake of the US election, and related issues like “fake news” and organized trolling. My goal here is to connect this new wave of enthusiasm to history and research.

This post is about persuasion. I’m not going to spend much time on the ethics of these techniques, and even less on the question of who is actually right on any particular point. That’s for another conversation. Instead, I want to talk about what works. All of these methods are just tools, and some are more just than others. Think of this as Defense Against the Dark Arts.

Let’s start with the nation states. Modern intelligence services have been involved in propaganda for a very long time and they have many names for it: information warfare, political influence operations, disinformation, psyops. Whatever you want to call it, it pays to study the masters.

Russia has a long history of organized disinformation, and their methods have evolved for the Internet era. The modern strategy has been dubbed “the firehose of falsehood” by RAND scholar Christopher Paul.

His recent report discusses this technique of pushing out diverse messages on a huge number of different channels, everything from obvious state sources like Russia Today to carefully obscured leaks of hacked material — leaks which are tailored to appeal to sympathetic journalists.

The experimental psychology literature suggests that, all other things being equal, messages received in greater volume and from more sources will be more persuasive. Quantity does indeed have a quality all its own. High volume can deliver other benefits that are relevant in the Russian propaganda context. First, high volume can consume the attention and other available bandwidth of potential audiences, drowning out competing messages. Second, high volume can overwhelm competing messages in a flood of disagreement. Third, multiple channels increase the chances that target audiences are exposed to the message. Fourth, receiving a message via multiple modes and from multiple sources increases the message’s perceived credibility, especially if a disseminating source is one with which an audience member identifies.

And as you might expect, there is a certain amount of outright fabrication — often mixed with the truth:

Contemporary Russian propaganda makes little or no commitment to the truth. This is not to say that all of it is false. Quite the contrary: It often contains a significant fraction of the truth. Sometimes, however, events reported in Russian propaganda are wholly manufactured, like the 2014 social media campaign to create panic about an explosion and chemical plume in St. Mary’s Parish, Louisiana, that never happened. Russian propaganda has relied on manufactured evidence—often photographic. … In addition to manufacturing information, Russian propagandists often manufacture sources.

But for me, the most surprising conclusion of this work is that a source can still be credible even if it repeatedly and blatantly contradicts itself:

Potential losses in credibility due to inconsistency are potentially offset by synergies with other characteristics of contemporary propaganda. As noted earlier in the discussion of multiple channels, the presentation of multiple arguments by multiple sources is more persuasive than either the presentation of multiple arguments by one source or the presentation of one argument by multiple sources. These losses can also be offset by peripheral cues that enforce perceptions of credibility, trustworthiness, or legitimacy. Even if a channel or individual propagandist changes accounts of events from one day to the next, viewers are likely to evaluate the credibility of the new account without giving too much weight to the prior, “mistaken” account, provided that there are peripheral cues suggesting the source is credible.

Orwell was right: “We have always been at war with Eastasia” really does work, if there are enough people repeating it.

Paul suggests that the counter-strategy is not to try to refute the message, but to reach the target audience first with an alternative. Fact checking, which is really after-the-fact-checking, may not be the most effective plan. He suggests instead that we “forewarn audiences of misinformation, or merely reach them first with the truth, rather than retracting or refuting false ‘facts.’” In this light, Facebook’s plan to show the fact check along with the article seems like a much better strategy than sending someone a fact checking link when they repeat a falsehood.

He also suggests that we “focus on guiding the propaganda’s target audience in more productive directions.” Which is exactly what China does.

China is famous for its highly developed network censorship, from the Great Firewall to its carefully policed social media. The role of the government “public opinion guides,” China’s millions of paid commenters, has been murkier — until now.

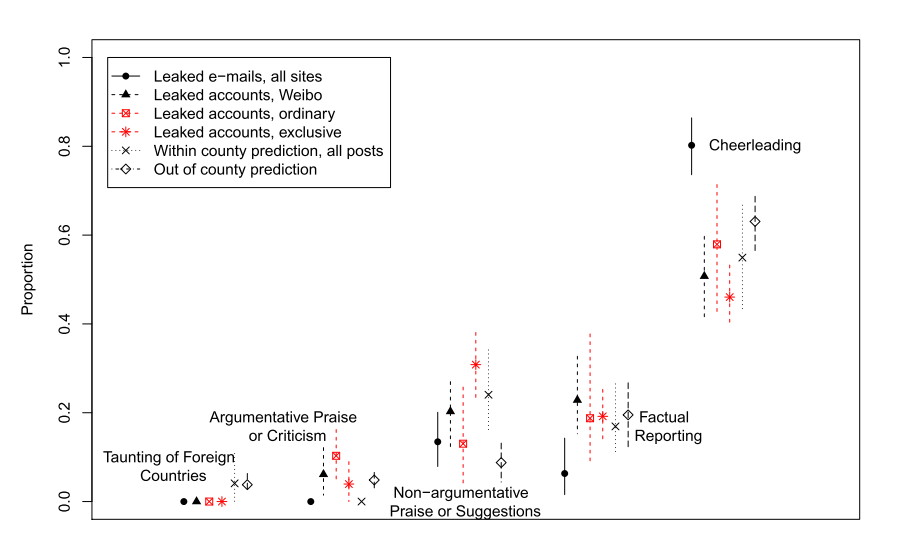

The Atlantic has a readable summary of recent research by Gary King, Jennifer Pan, and Margaret E. Roberts. They started with thousands of leaked Chinese government emails in which commenters report on their work, which became the raw data for an accurate predictive model of which posts are government PR. A surprising twist: nearly 60% of paid commenters will just tell you they’re posting for the government when you ask them, which allowed these scholars to verify their country-wide model. But the core of the analysis is what these posters were doing.

From the paper:

We estimate that the government fabricates and posts about 448 million social media comments a year. In contrast to prior claims, we show that the Chinese regime’s strategy is to avoid arguing with skeptics of the party and the government, and to not even discuss controversial issues. We infer that the goal of this massive secretive operation is instead to regularly distract the public and change the subject, as most of the these posts involve cheerleading for China, the revolutionary history of the Communist Party, or other symbols of the regime.

And here’s the breakdown of what these posters were doing. “Cheerleading” dominates for every sample of government accounts. Arguments are rare.

Note that this is only one half of the Chinese media control strategy. There is still massive censorship of political expression, especially of any post relating to organized protest, which is empirically good at toppling governments.

All of this without ever getting into an argument. This suggests that there is no need to engage critics or trolls to get your message out (though it might still be worthwhile to distract and monitor them). Just communicate positive messages to the masses while you quietly disable your detractors. A counter-strategy, if you are facing this type of opponent, is organized, visible resistance. Get into the streets and make it impossible to talk about something else, though note that recent experiments suggest that violent or extreme protest tactics will backfire.

But China has a tightly controlled media and the greatest censorship regime the world has ever seen. If you’re operating in a relatively free media environment, you have to manipulate the press instead.

The most insightful thing I have ever read about the wonder that was Milo Yiannopoulos comes from the man who wrote a book on manipulating the media, documenting the strategies he devised to market people like Tucker Max. Ryan Holiday writes,

We encouraged protests at colleges by sending outraged emails to various activist groups and clubs on campuses where the movie was being screened. We sent fake tips to Gawker, which dutifully ate them up. We created a boycott group on Facebook that acquired thousands of members. We made deliberately offensive ads and ran them on websites where they would be written about by controversy-loving reporters. After I began vandalizing some of our own billboards in Los Angeles, the trend spread across the country, with parties of feminists roving the streets of New York to deface them (with the Village Voice in tow).

But my favorite was the campaign in Chicago—the only major city where we could afford transit advertising. After placing a series of offensive ads on buses and the metro, from my office I alternated between calling in angry complaints to the Chicago CTA and sending angry emails to city officials with reporters cc’d, until ‘under pressure,’ they announced that they would be banning our advertisements and returning our money. Then we put out a press release denouncing this cowardly decision.

I’ve never seen so much publicity. It was madness.

….

The key tactic of alternative or provocative figures is to leverage the size and platform of their “not-audience” (i.e. their haters in the mainstream) to attract attention and build an actual audience. Let’s say 9 out of 10 people who hear something Milo says will find it repulsive and juvenile. Because of that response rate, it’s going to be hard for someone like Milo to market himself through traditional channels. His potential audience is too spread out, and doesn’t have that much in common. He can’t advertise, he can’t find them one by one. It’s just not going to scale.

But let’s say he can acquire massive amounts of negative publicity by pissing off people in the media? Well now all of a sudden someone is absorbing the cost of this inefficient form of marketing for him.

(Emphasis mine.) That one’s adversaries should be denied attention is not a new idea. Indeed, this is central to the “no-platforming” tactic. But no-platforming plays right into an outrage-based strategy if it results in additional attention (see also the Streisand effect). Worse, all the incentives for media makers are wrong. It’s going to be very hard for journalists and other media figures to wean themselves off of outrage, because strong emotional reactions get people to share information (1, 2, 3, etc.) and information sharing has become the basis of distribution, which is the basis of revenue. We are in dire need of new business models for news.

But this breakdown of the mechanics of outrage marketing does suggest a counter-strategy: before you get mad, or report on someone getting mad, do your homework. Holiday called to complain about his own content, put out false press releases, etc. A smart journalist might be able to uncover this deception. In a propaganda war, all journalists should be investigative journalists.

Attention is the currency of networked propaganda. Attention is the key. Be very careful who you give it to, and understand how your own emotions and incentives can be exploited.

But even if you’ve uncovered a deception, it’s not enough to say that someone else is lying. You have to tell a different story.

Telling people that something they’ve heard is wrong may be one of the most pointless things you can do. A long series of experiments shows that it rarely changes belief. Brendan Nyhan is one of the main scholars here, with a series of papers on political misinformation. This is about human psychology; we simply don’t process information rationally, but instead employ a variety of heuristics and cognitive shortcuts (not necessarily maladaptive in general) that can be exploited. The classic experiment goes like this:

Participants in a study within this paradigm are told that there was a fire in a warehouse and that there were flammable chemicals in the warehouse that were improperly stored. When hearing these pieces of information in succession, people typically make a causal link between the two facts and infer that the fire was caused in some way by the flammable chemicals. Some subjects are then told that there were no flammable chemicals in the warehouse. Subjects who have received this corrective information may correctly answer that there were no flammable chemicals in the warehouse and separately incorrectly answer that flammable chemicals caused the fire. This seeming contradiction can be explained by the fact that people update the factual information about the presence of flammable chemicals without also updating the causal inferences that followed from the incorrect information they initially received.

Worse, repeating a lie in the process of refuting it may actually reinforce it! The counter-strategy is to replace one narrative with another. Affirm, don’t deny:

Which of these headlines strikes you as the most persuasive:

- “I am not a Muslim, Obama says.”

- “I am a Christian, Obama says.”

The first headline is a direct and unequivocal denial of a piece of misinformation that’s had a frustratingly long life. It’s Obama directly addressing the falsehood.

The second option takes a different approach by affirming Obama’s true religion, rather than denying the incorrect one. He’s asserting, not correcting.

Which one is better at convincing people of Obama’s religion? According to recent research into political misinformation, it’s likely the latter.

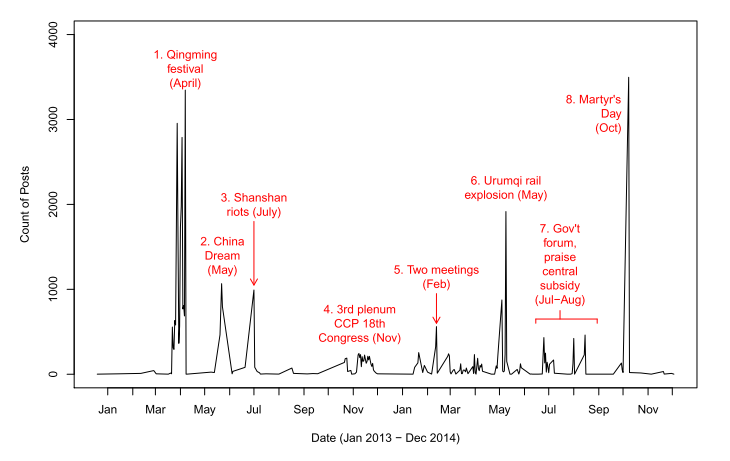

Let’s return to China for a moment. Here’s a chart, from the paper above, on the number of government social media postings over time:

Posts spiked around political events (CCP Congress) and emergencies that the government would rather citizens not talk about, such as riots and a rail explosion. This “cheerleading” propaganda wasn’t simply a regular diet of good news, but a precisely controlled strategy designed to drown out undesirable narratives.
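If you have your own daily counts of posts from a set of suspect accounts, a burst like this is easy to look for. What follows is only a minimal sketch and not the method used in the paper: the function name `find_bursts`, the threshold, and the sample numbers are all invented for illustration. It flags days whose volume sits far above the typical level, using the median and the median absolute deviation so that the spikes themselves don't distort the baseline.

```python
from statistics import median


def find_bursts(daily_counts, threshold=5.0):
    """Return indices of days whose post count is far above the typical level.

    Uses the median and the median absolute deviation (MAD), which are
    robust to the very spikes we are trying to find. The threshold is an
    arbitrary illustrative choice, not a value from the research above.
    """
    typical = median(daily_counts)
    mad = median(abs(c - typical) for c in daily_counts)
    # Guard against a zero MAD when most days have identical counts.
    scale = mad if mad > 0 else 1.0
    return [i for i, c in enumerate(daily_counts)
            if (c - typical) / scale > threshold]


# Hypothetical daily counts, invented for illustration only.
counts = [12, 15, 11, 14, 13, 240, 16, 12, 10, 180, 14, 13]
print(find_bursts(counts))  # prints [5, 9]
```

The flagged days are only a starting point. The interesting question is what else was happening on those days that someone might have wanted to drown out.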

One of the problems of a free press is that “the media” is a herd of cats. There really is no central authority — independence and diversity, huzzah! Similarly, distributed protest movements like Anonymous can be very effective for certain types of activities. But even Anonymous had central figures planning operations.

The most successful propagandists, like the most successful protest movements, are very organized. (Lost in the current “diversity of tactics” rhetoric is the historical fact that key battles in the civil rights movement were carefully planned.) Organization and planning require intelligence. You have to know who your adversaries are and what they are doing. Intelligence involves basic steps like:

- Pay attention to the details of every encounter. Who wrote that story or posted that comment?

- Research the actors and their networks. Who are they connected to? What communication channels do they use to coordinate? Who directs operations?

- Real-time monitoring. When a misinformation campaign begins, you need to get to your audience before they do (with something more than just a debunk, as above).
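The first two steps amount to keeping a structured record of who said what, where, and who they are tied to. Here is a minimal sketch of such a record. Every name in it (`Actor`, `ActorLog`, `record`, `most_connected`, and the sample accounts) is hypothetical, and a shared spreadsheet would serve just as well.

```python
from dataclasses import dataclass, field


@dataclass
class Actor:
    """One row in the log: an account or person, where they post, who they link to."""
    name: str
    channels: set = field(default_factory=set)
    connected_to: set = field(default_factory=set)


class ActorLog:
    """A tiny in-memory stand-in for a shared spreadsheet of actors."""

    def __init__(self):
        self.actors = {}

    def record(self, name, channel, connected_to=()):
        """Note that `name` appeared on `channel`, with optional known connections."""
        actor = self.actors.setdefault(name, Actor(name))
        actor.channels.add(channel)
        for other in connected_to:
            # Store each connection in both directions.
            actor.connected_to.add(other)
            self.actors.setdefault(other, Actor(other)).connected_to.add(name)
        return actor

    def most_connected(self, n=3):
        """Return the names of the n actors with the most recorded connections."""
        ranked = sorted(self.actors.values(),
                        key=lambda a: (-len(a.connected_to), a.name))
        return [a.name for a in ranked[:n]]


# Hypothetical sightings, invented for illustration only.
log = ActorLog()
log.record("account_a", "twitter", connected_to=["account_b", "account_c"])
log.record("account_d", "blog", connected_to=["account_a"])
print(log.most_connected(1))  # prints ['account_a']
```

Connections are stored in both directions so that an account which never posts but is linked to by many others still shows up near the top, which is often where to start asking who directs operations.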

Although there may be useful technological approaches to tracing networks, there is no magic here; anyone can keep a spreadsheet of actors, you can do real-time monitoring with little more than Tweetdeck, and investigative journalists already know how to investigate. But centralization may be important. The Russian approach of “many messages, many channels” suggests that an open, diverse network can succeed at individual propaganda actions, and I bet it would succeed at counter-propaganda actions too. But intelligence is different, and it’s an unanswered question whether the messy collection of journalists, NGOs, universities, and activists in a free society can do effective counter-propaganda intelligence, or even agree sufficiently on what that would be. I don’t think a distributed approach will work here; someone needs to own the database and run the show.

Update: The East StratCom Task Force seems to be exactly this sort of centralized actor for the EU.

But one way or another, you have to know what your propagandist adversary is doing, in detail and in real time. If you don’t have that critical function taken care of, you’re going to be forever reactive, which means you’re probably going to lose.

PS: Up your security game

Hacking and leaking — which is one of the more effective ways to dox someone — has become a propaganda tactic. If you don’t want to be on the wrong end of this, I recommend immediately doing the following easy things:

- Enable 2-step logins on your email and other important accounts.

- Learn to recognize phishing.

I suspect this would prevent 70%-90% of hacking and doxxing attempts. It would have saved John Podesta. Here’s lots more on easy ways to protect yourself.
